Blog

Trap development update

Our high-catch-rate trap has now been out in the wild in a few areas, so it's time to give you a brief update.

Low predator interaction on tree-mounted trap

Our customer set up the trap according to the manufacturer's instructions and had already caught one possum before the camera was put in front of it. Over the next three weeks the video shows 79 predators wandering past and zero catches.

The Sounds of Innovation

The Cacophony Project exists to put better tools in the hands of everyone engaged in the battle to make Aotearoa Predator Free. So when we hear DOC's Program Manager for Predator Free 2050 excited about our tools having the possibility to "really change the game", it helps confirm our belief that we're on the right journey.

Our Master's intern publishes excellent project report

For a period of 3 months last year we had the pleasure of having Sapphire Hampshire join the project as an intern. Saphy was a Master's student in International Nature Conservation at Lincoln University, from Georg-August-Universität in Göttingen.

Our camera finds invading stoat within two nights

Our partners at 2040.co.nz have recently provided a Thermal Camera to the hard-working folk at Auckland Council who manage the impressively predator-free Shakespear National Park.

Read all about how our technology is helping them tackle a stoat invasion.

Encouraging trap test results...

Our high interaction rate traps are out in the field catching predators at multiple sites. Today we share some early results and a short update on some of the improvements we're making as we learn more.

Making Predator Fences Active

Once an area has been cleared of predators, how can we defend it? The traditional answer has been static fences. Today we introduce a new concept - making those fences active.

More AI improvements

A group from Auckland University has been training AI models using our thermal video library.

We are very pleased with the results the team have achieved and we will be including the details of these models in our upcoming software updates for the Cacophony monitoring tools. We're confident this will help increase the accuracy of our classifications and thus reduce effort even further.

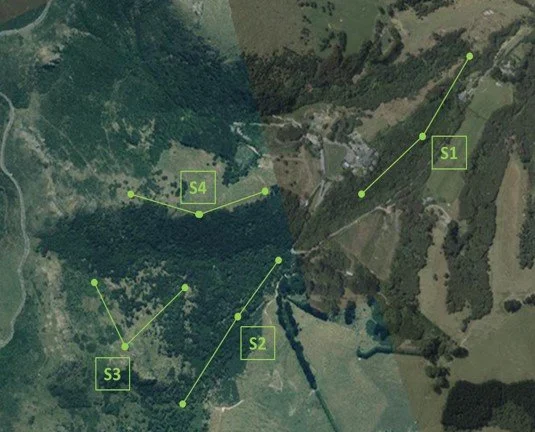

A protocol for monitoring with Thermal Cameras

“You can’t manage what you can’t measure.” Abbie Reynolds, CEO, Predator Free 2050

If you’ve ever been involved in installing and maintaining a trapping network, you’ve probably already spent hours (perhaps a few, perhaps dozens, perhaps hundreds) trying to measure the abundance of predators.

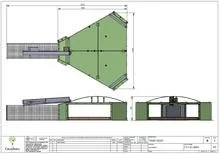

Predator Free 2050 Ltd fund our trap development

The Cacophony Project is pleased to confirm our agreement with Predator Free 2050 to fund the development of our Blind-Snap Model A Trap.

Training our Machine Vision for Wallabies

Earlier in the year we were asked if we could expand the machine vision used with our thermal camera to automatically detect wallabies with the goal of monitoring and controlling the wallaby population.

The Cacophony Project Strategy and Progress: A summary

To bring back the Cacophony of native fauna in NZ there are a number of separate parts of the puzzle that we are trying to solve. What follows is a summary of each sub-goal as we see it along with an indication of where we are up to with our progress.

Blind-snap - our first crack at a high interaction predator trap

This blog shows our first attempt at a device designed to trap hard-to-trap or re-invading predators.

Why improved interaction rate is the holy grail for trapping

Our camera experiments have shown consistently and across a number of different environments that, for an area that has been trapped for a while, there is a persistent population that avoids existing traps. Today we introduce a collection of approaches and ideas that we believe can actually improve the predator interaction rate and give us a real chance of achieving our predator-free goals.

Will improving the kill rate of existing traps make much of a difference in our ability to eliminate predators?

Today we tackle the question of the kill rate of existing traps. The arsenal of traps available to trappers includes some well-designed, field-tested, and hardy workhorses. And yet we know that even the most skillful deployment of these in the field only delivers a level of suppression, not the total elimination we strive for. Today we discuss why that might be.

Will automatic baiting and long life lures make a difference to our ability to totally eliminate predators?

Part of the goal of this model was to help understand what strategies might make the biggest difference and thus improve the chance of total predator elimination. In this blog we discuss the predictions our model makes on the impact of auto-resetting traps.

We're hiring. Any awesome QA Engineers out there?

As you may have noted from our recent blog entries, life is getting busy for us here at The Cacophony Project. And you know we love building solutions, right? So we've been building quite a lot of software recently, and we need some help making sure it makes it out the door at the highest quality possible.

The news is spreading…

Regular readers of this blog will have noted our recent pivot in focus. Our team are busy working on our thermal screening device and the devices are already out at Beta testing sites helping employers keep their staff safe.